Tracing enables you to monitor and analyze the message processing flow of your API Proxy in real time. Trace operations are performed per API Proxy and are activated separately for each one. Tracing is particularly useful for:

- Understanding how policies work

- Detecting performance issues

- Debugging

- Examining request/response flow

- Validating transformations

Key capabilities:

- API Proxy-Based Trace

- Detailed Tracking

- Performance Analysis

- Debugging

Starting Trace Mode

Trace mode is started separately for each API Proxy. You can activate trace mode from the API Proxy’s own page.

Prerequisites

Before starting trace mode:

- The API Proxy must be loaded

- An environment must be selected

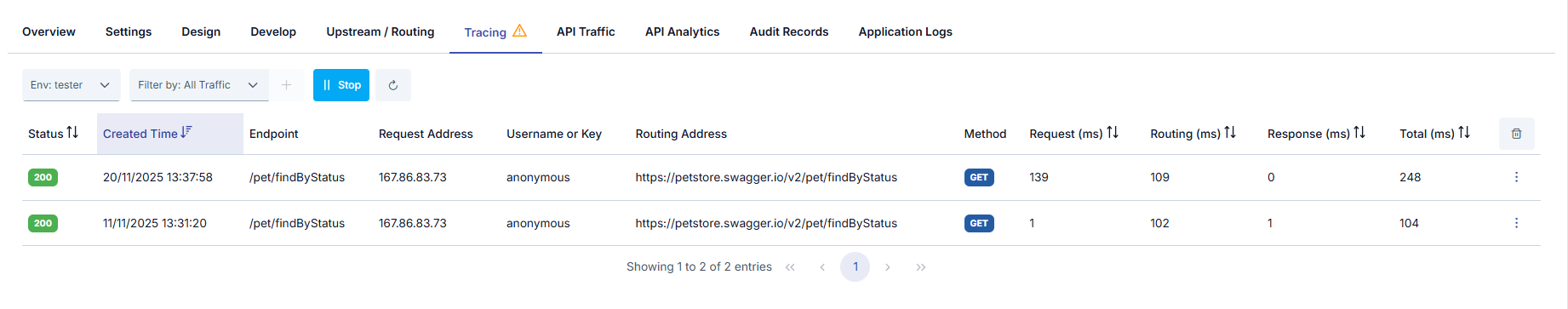

Trace Records

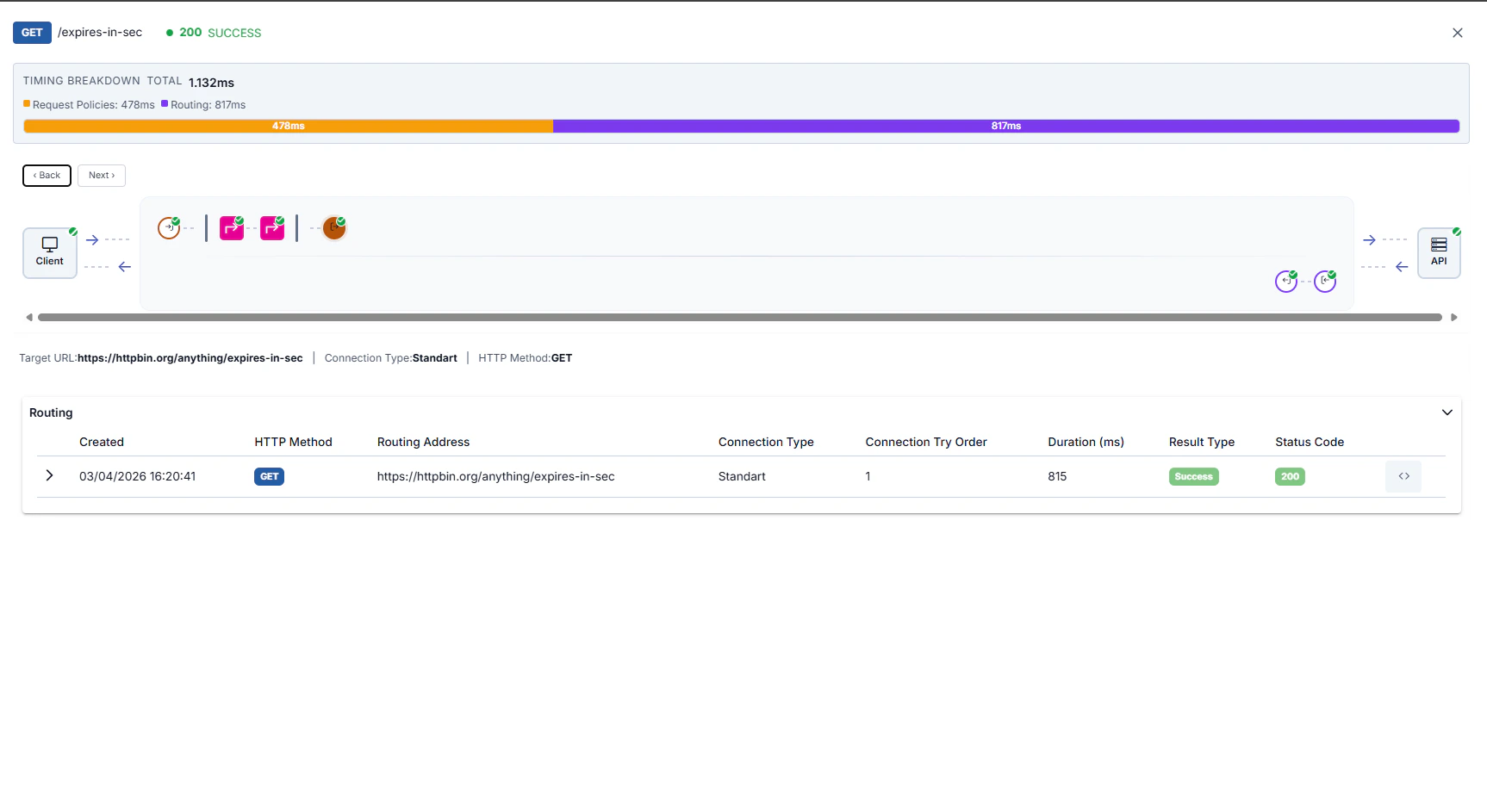

After trace mode is activated, requests coming to the API Proxy are automatically tracked and detailed records are created.

Trace List

The following information is displayed for each record in the trace list:

| Information | Description |
|---|---|
| Timestamp | Date and time when the request arrived |
| Method | HTTP method (GET, POST, PUT, DELETE, etc.) |
| Path / Endpoint | Request path and endpoint name |
| Status Code | Response status code (200, 404, 500, etc.) |
| Duration | Total processing time (ms) |
| Policies | Number of policies executed |
| Correlation ID | Request-specific correlation ID |

The trace detail of each record also shows:

- The state of the message before and after each step of the main flow

- The request and response messages of the API Call

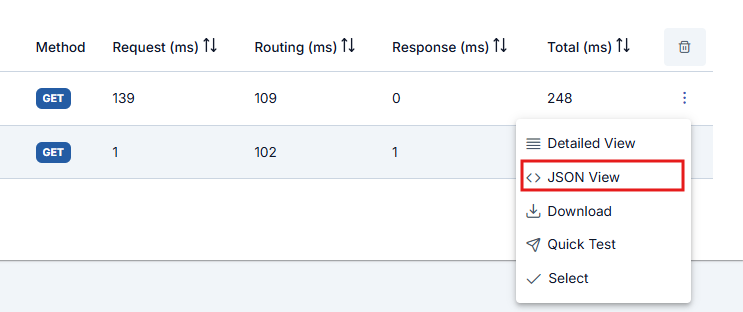

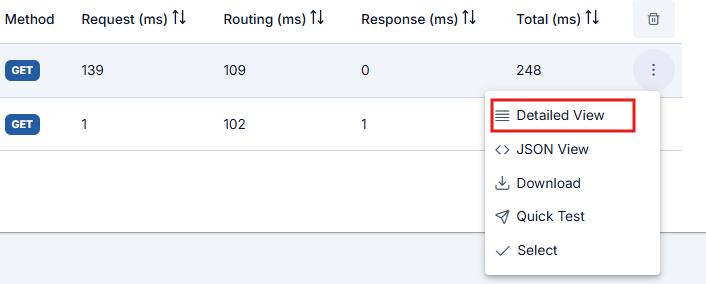

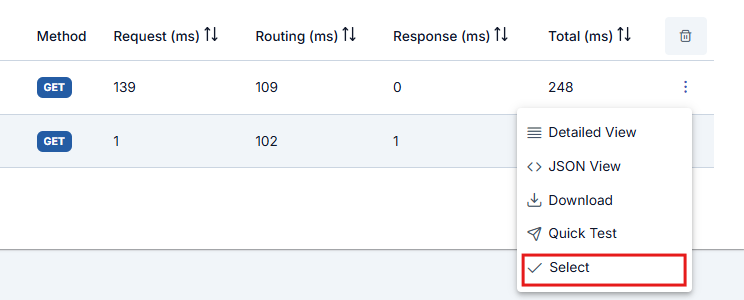

Trace Operations

The following operations can be performed for each trace record:

- Detailed viewing

- View in JSON format

- Download

- Quick Test
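The View in JSON format and Download operations give you a trace record as JSON, which is handy for scripted checks. The exact export schema is not described on this page, so the sketch below assumes a hypothetical layout whose keys (`timestamp`, `method`, `path`, `statusCode`, `durationMs`, `policies`, `correlationId`) simply mirror the trace list columns; rename them to match a real exported file. The sample record is made up.

```python
import json
from dataclasses import dataclass


@dataclass
class TraceSummary:
    """One trace-list row rebuilt from a downloaded record."""
    timestamp: str
    method: str
    path: str
    status_code: int
    duration_ms: float
    policy_count: int
    correlation_id: str


def summarize(raw: str) -> TraceSummary:
    # NOTE: every key below is a hypothetical name, not a documented Apinizer schema.
    record = json.loads(raw)
    return TraceSummary(
        timestamp=record["timestamp"],
        method=record["method"],
        path=record["path"],
        status_code=int(record["statusCode"]),
        duration_ms=float(record["durationMs"]),
        policy_count=len(record.get("policies", [])),
        correlation_id=record["correlationId"],
    )


if __name__ == "__main__":
    # Made-up sample record.
    sample = """{
        "timestamp": "2024-01-01T10:00:00Z",
        "method": "GET",
        "path": "/orders/42",
        "statusCode": 200,
        "durationMs": 87.5,
        "policies": [{"name": "Basic Auth"}, {"name": "JSON to XML"}],
        "correlationId": "abc-123"
    }"""
    row = summarize(sample)
    print(row.method, row.path, row.status_code, row.duration_ms, row.policy_count)
```

Keeping the parsing in one function means only `summarize` has to change once the real field names are known.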

Tracking Policy Flow

Click the Select button to view the detailed execution information that Apinizer records for each policy run on the API Proxy while step-by-step tracing is active.

- Successful Flow

- Failed Flow

Policy Execution Details

Pre-flow Policies

- Policy Name: Name of the executed policy

- Execution Time: Policy execution time (ms)

- Status: Success / Failure status

- Changes: Changes made by the policy to the message (header, body, variable changes)

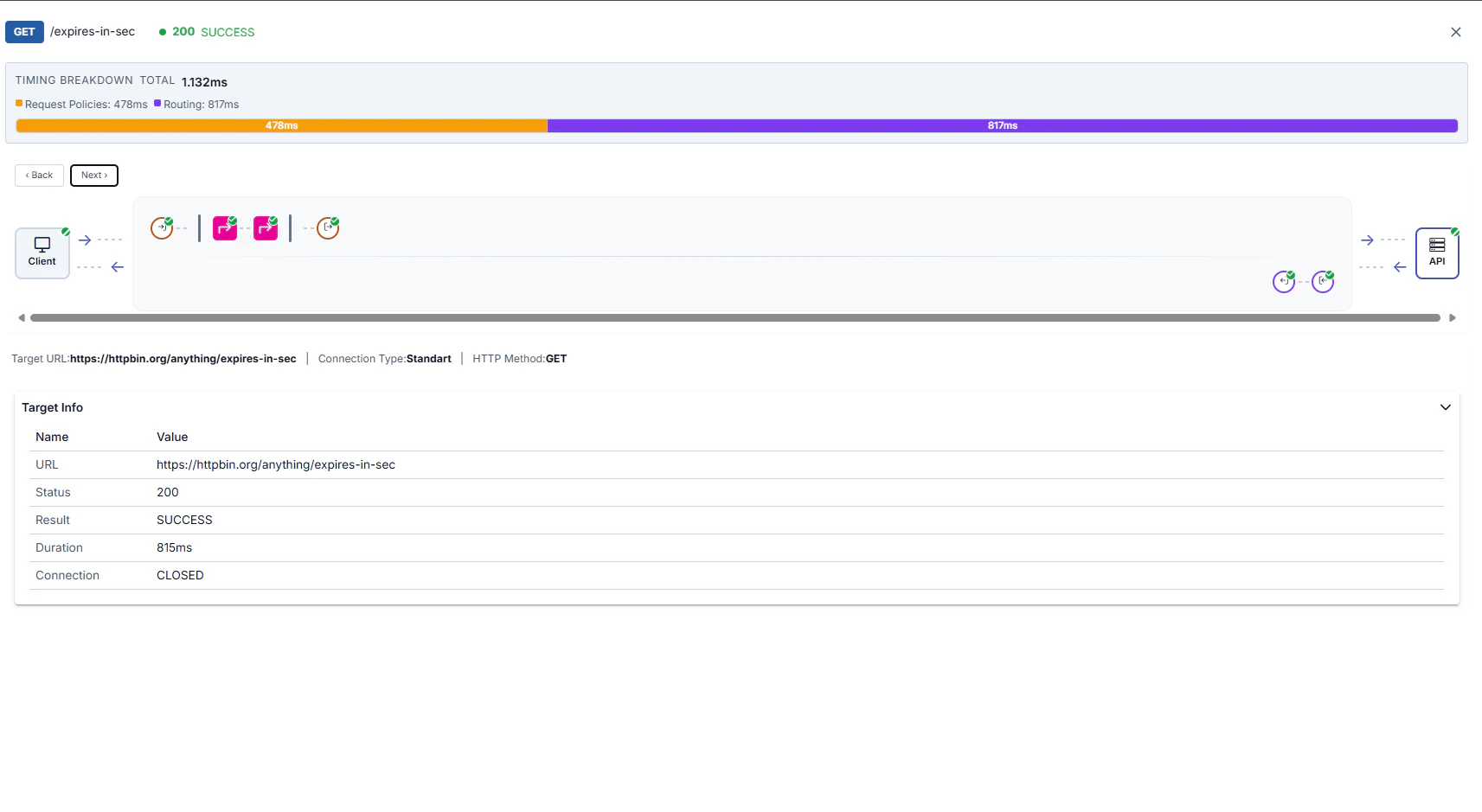

Route Step (Routing)

- Selected Upstream: Selected upstream target

- Load Balancing Decision: Load balancing algorithm decision

- Connection Time: Connection time to backend (ms)

- Backend Response Time: Backend response time (ms)

- Retry/Failover: Retry or failover status

Post-flow Policies

- Policy Name: Name of the executed policy

- Execution Time: Policy execution time (ms)

- Status: Success / Failure status

- Changes: Changes made to the response message

Fault Handler

- Error Type: Error type (authentication, routing, policy, etc.)

- Error Message: Error message

- Handler Policies: Executed error handler policies

- Final Response: Final response returned to client

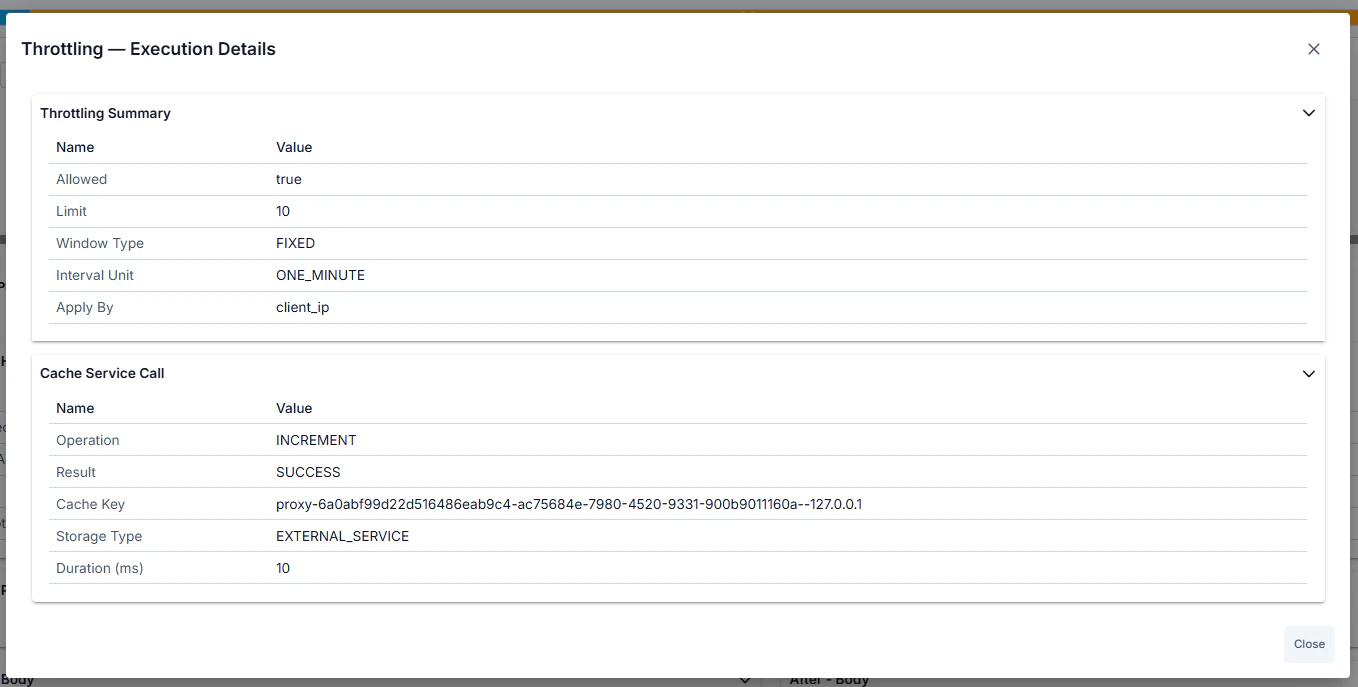

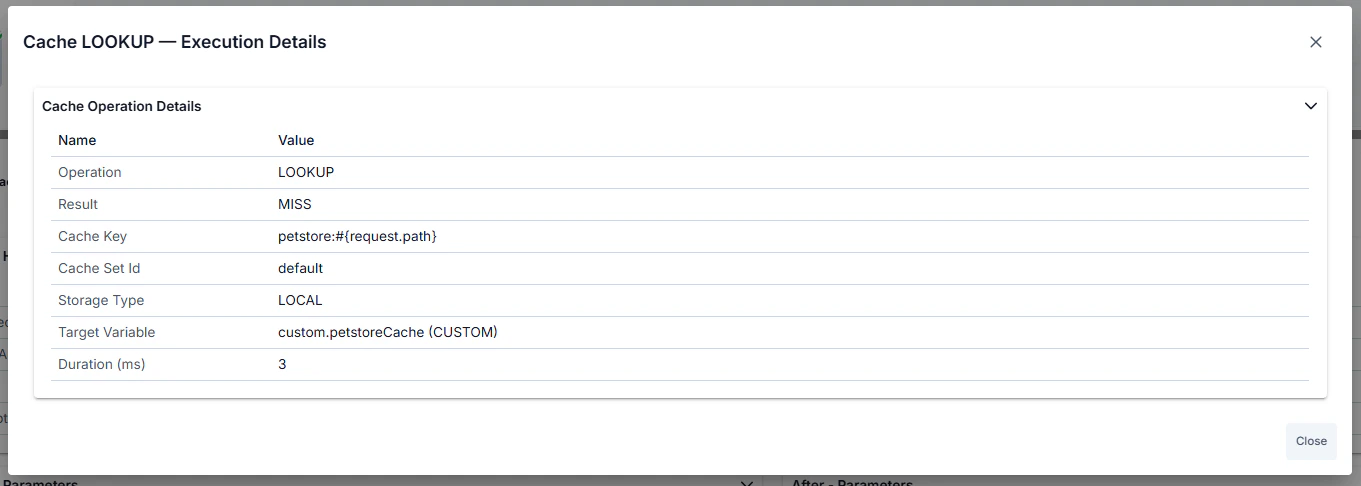

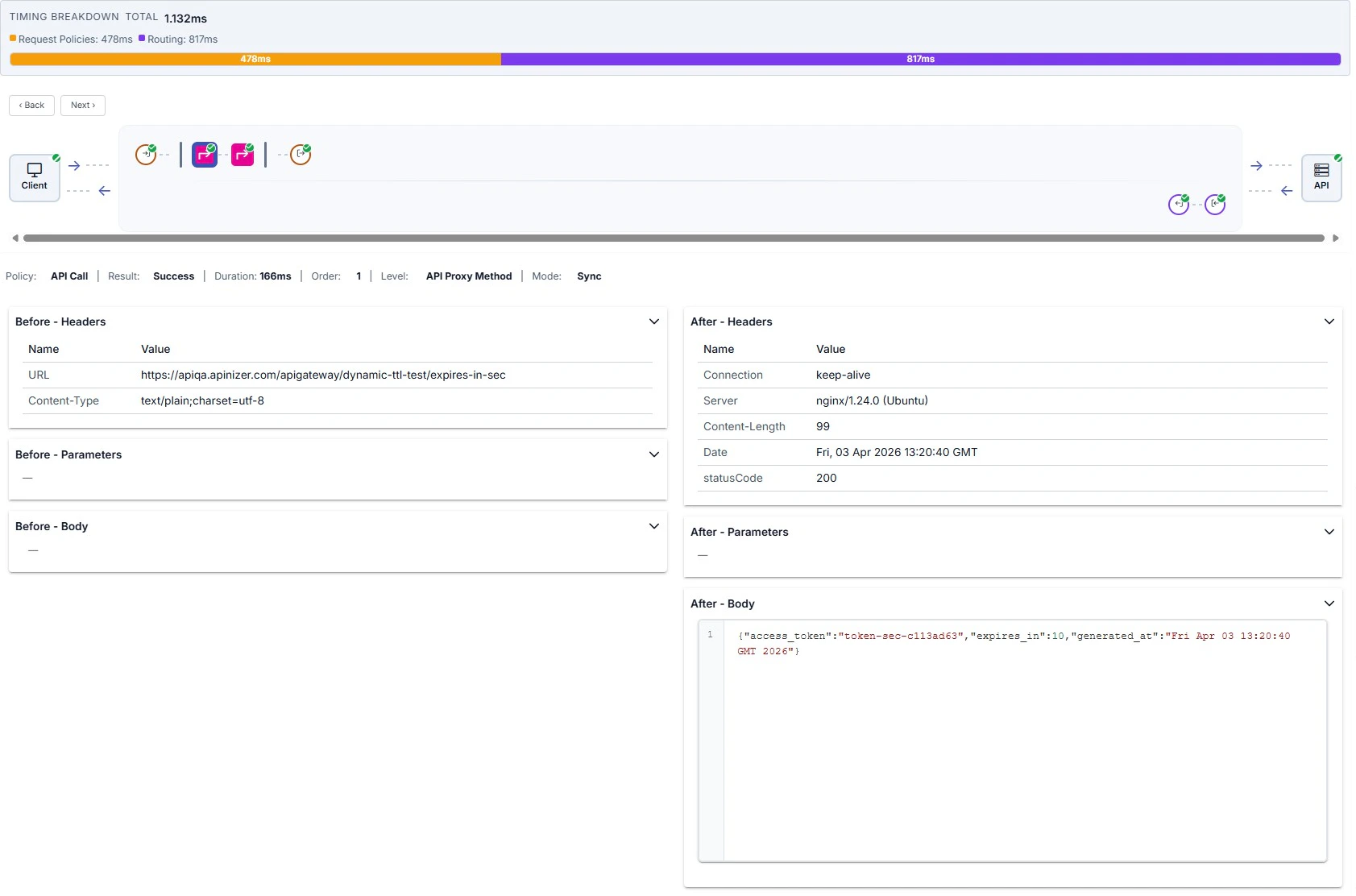

Policy-Specific Execution Details

Certain policy types display an “Execution Details” button in the policy panel header bar. Clicking the button opens a modal with policy-type-specific domain information presented in accordion sections. This detailed view is available on both request-side (pre-flow, request) and response-side (response, post-flow) policy nodes in the trace detail drawer.

- Cache Policy — Operation (LOOKUP/POPULATE/INVALIDATE), cache key, cache set name, hit/miss status (for LOOKUP), source/target variable names, TTL applied, time remaining in cache.

- API Call Policy — Cache status (HIT/MISS/DISABLED), backend service URL, HTTP method and status code, request/response headers, full request/response body, connection time, backend response time, retry/failover details.

- Backend API Authentication Policy — Authentication result (authenticated true/false), extracted username or principal, assigned roles, extracted claims or attributes, HTTP authentication call details (URL, method, headers).

- JOSE Validation / Implementation Dynamic Key Fetch Policy — Key cache lookup status (hit/miss), validation or implementation mode, key extraction path (JSONPath/XPath), key ID (kid), algorithm used, HTTP call details for remote key fetch (if applicable).

- Client Ban Policy — Ban status (banned/not banned), ban source (IP address or other identifier), remaining ban duration, ban reason, cache lookup details.

- API Based Throttling Policy — Current request count and limit threshold, time window, allowed/blocked decision, cache lookup status (hit/miss), sliding window details.

- API Based Quota Policy — Current quota usage and quota limit, period end time, allowed/blocked decision, cache lookup status (hit/miss), reset time for next period.

- Business Rule Policy — Per-action execution list showing: action type, operator, execution status (executed/skipped/error), condition evaluation result, input and output values, execution duration in milliseconds.

Detailed Log Records

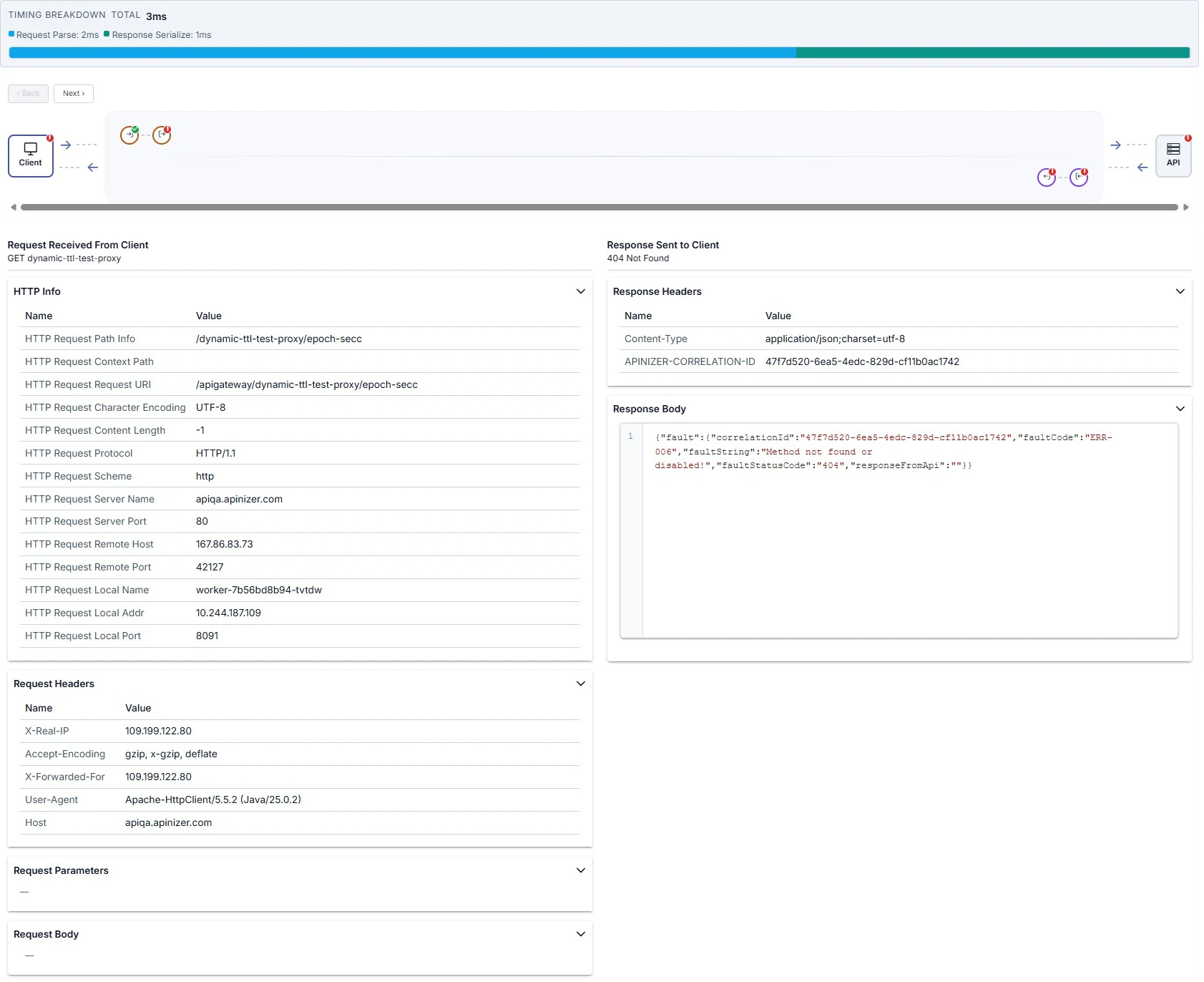

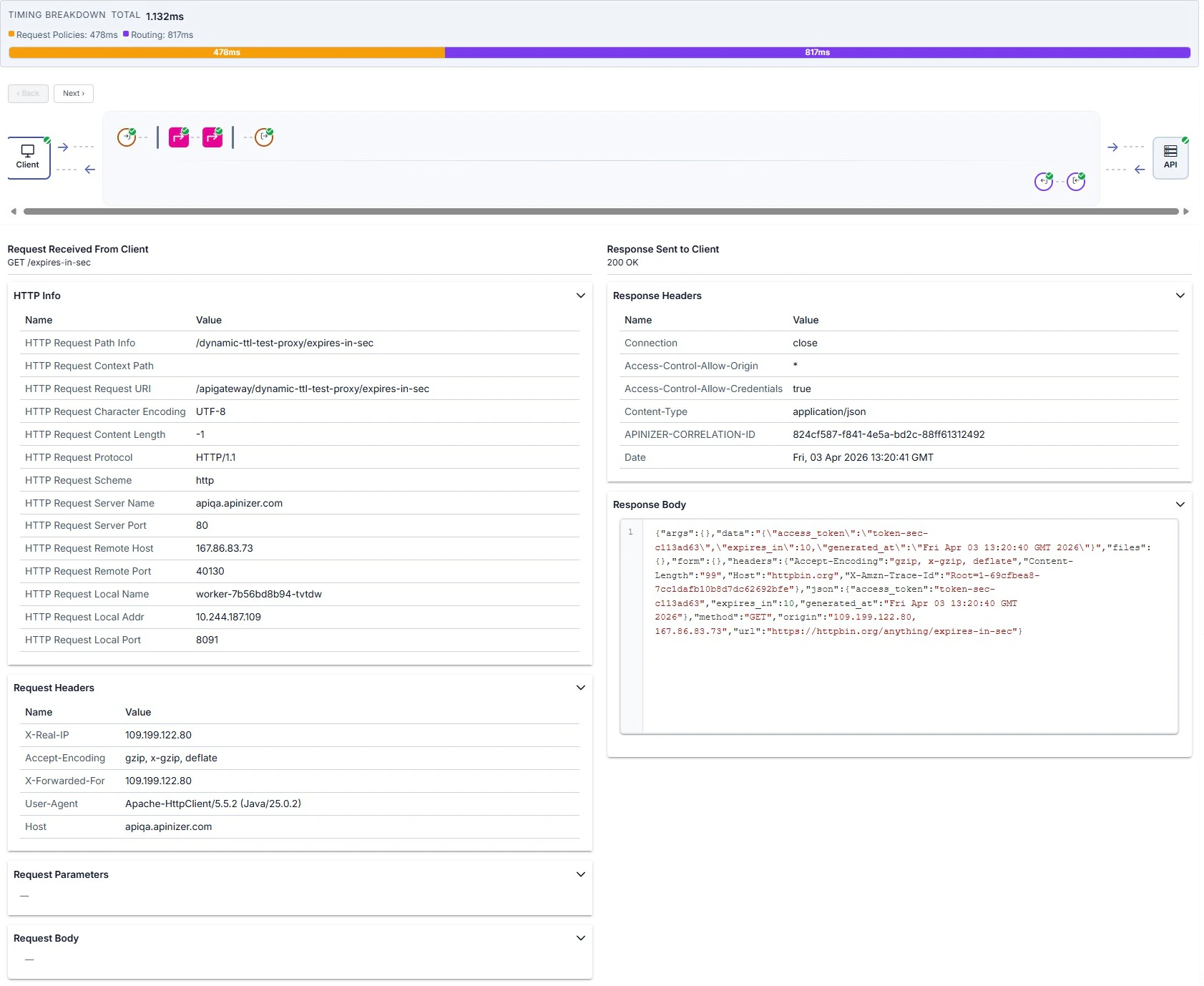

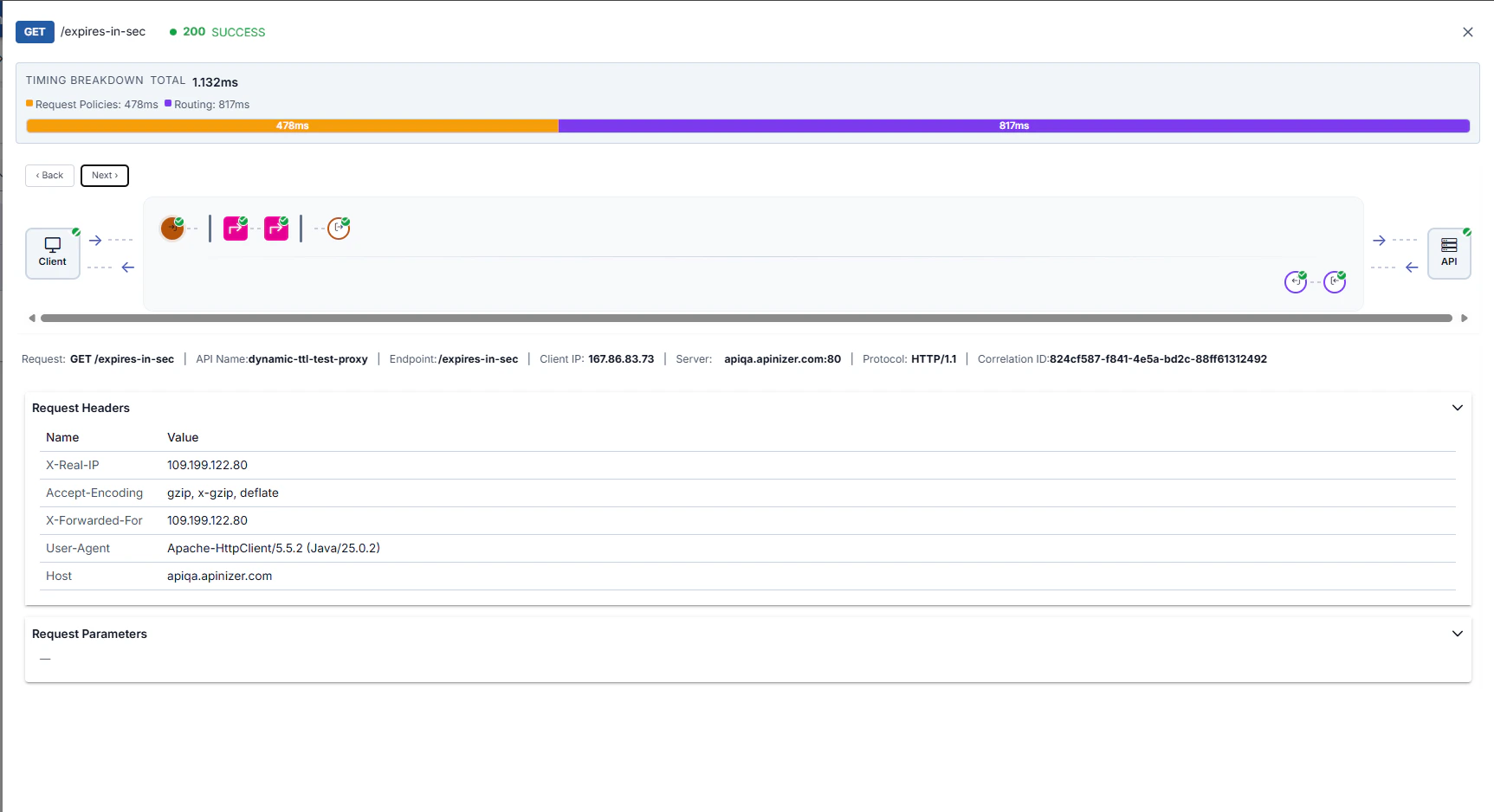

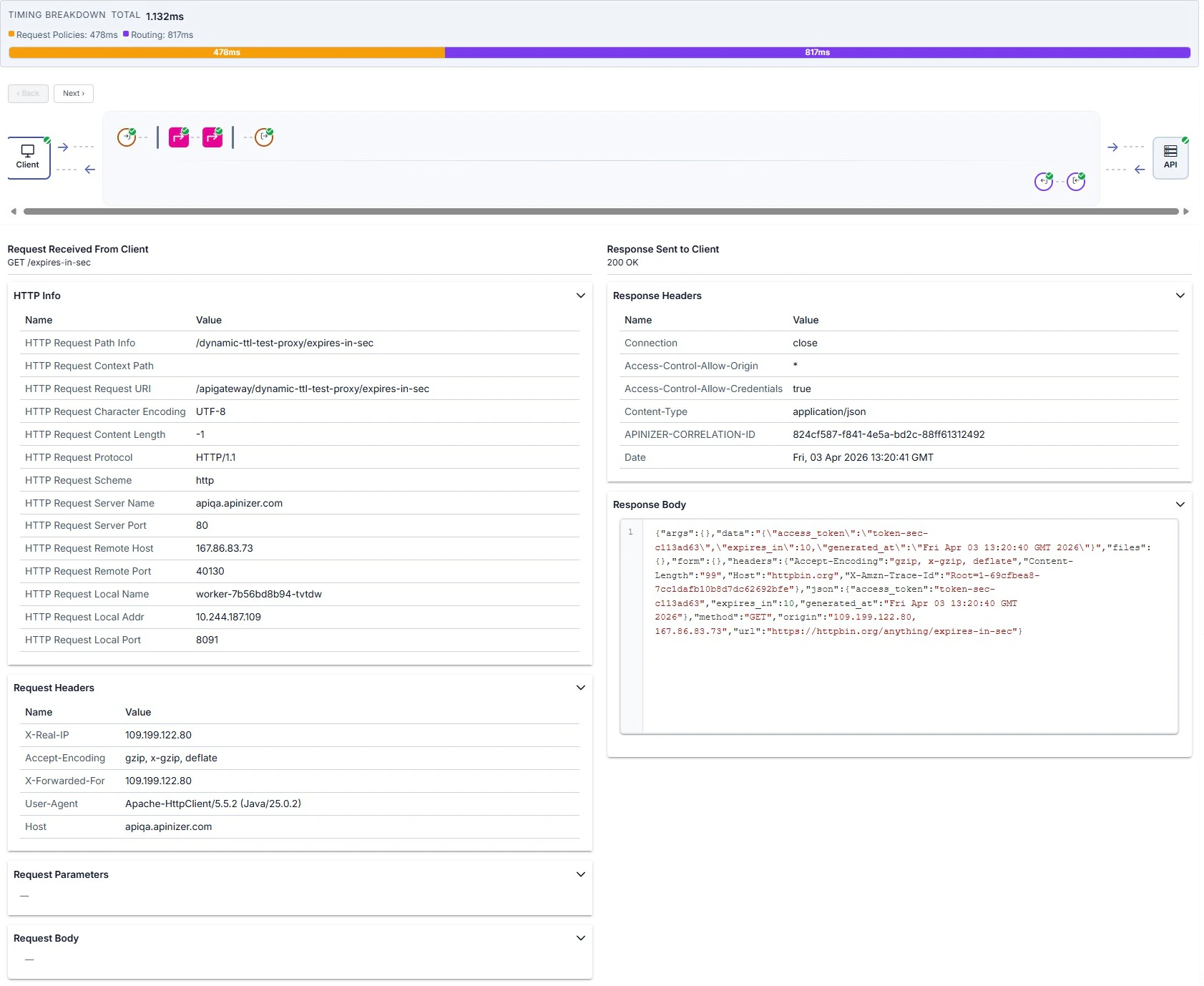

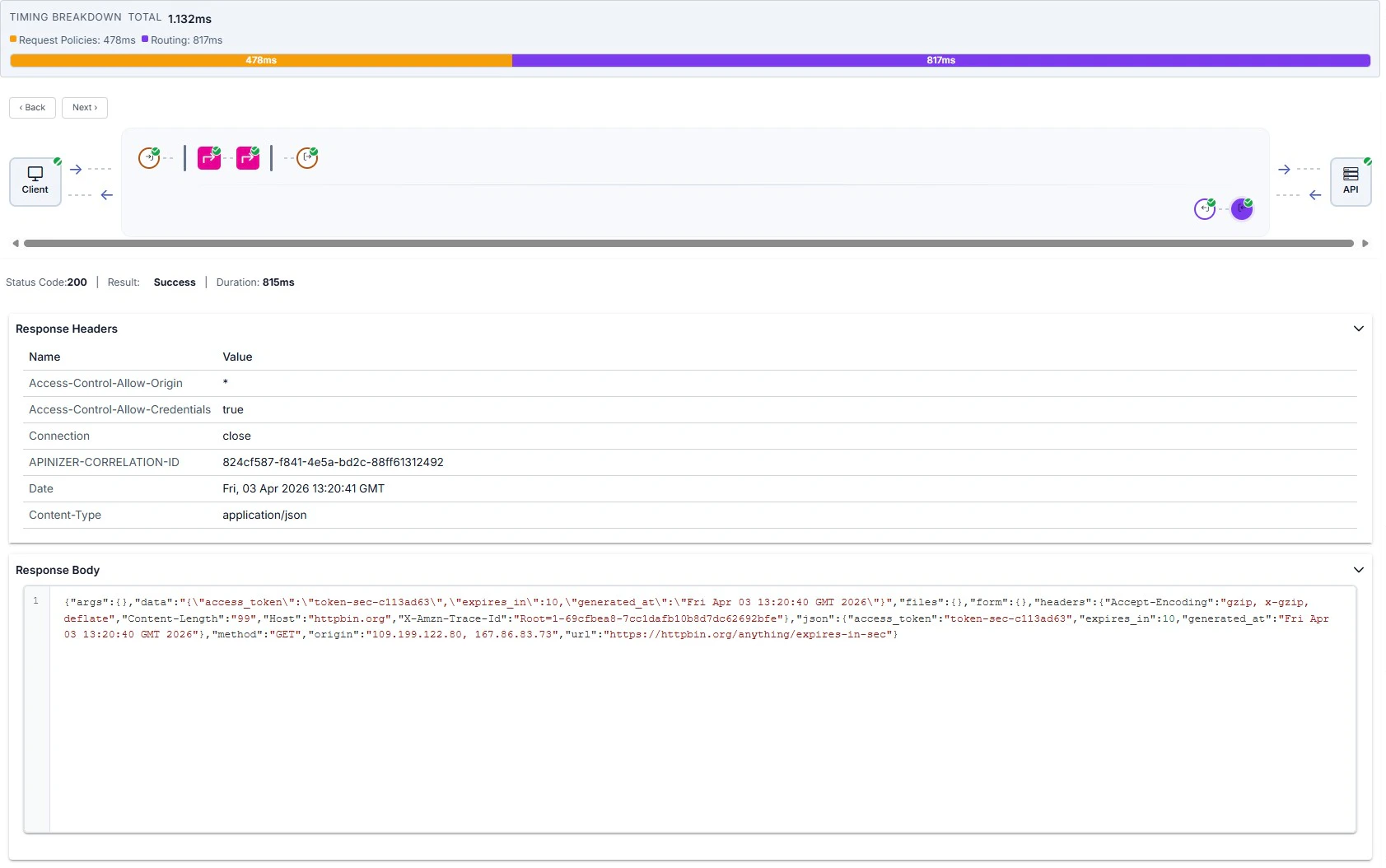

When you select a log row, the drawer summarizes the success or failure of the request through the flow map and the header, and the lower panel shows the tables and message bodies of the selected node. The overview screenshot shows the map, the summary line, and the request headers.

Client step

Policy step (Before / After)

Backend API and routing

Request/Response Comparison

Trace mode shows how the message changes along the flow:

- Before/After

- Transformation Analysis

- Header Changes

- Body Changes
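Header changes are easiest to read as a diff between the Before and After states of a single policy. A minimal sketch, assuming you have copied both header sets out of the trace drawer into plain dictionaries; the `diff_headers` helper and the sample header values are made up for illustration.

```python
def diff_headers(before: dict[str, str], after: dict[str, str]) -> dict[str, list[str]]:
    """Classify header changes between the Before and After state of a policy."""
    # HTTP header names are case-insensitive, so compare them in lower case.
    b = {k.lower(): v for k, v in before.items()}
    a = {k.lower(): v for k, v in after.items()}
    return {
        "added": sorted(k for k in a if k not in b),
        "removed": sorted(k for k in b if k not in a),
        "modified": sorted(k for k in a if k in b and a[k] != b[k]),
    }


# Made-up Before/After headers for a policy that converts JSON to XML.
before = {"Content-Type": "application/json", "Authorization": "Basic dXNlcjpwYXNz"}
after = {"content-type": "application/xml", "X-Correlation-Id": "abc-123"}
print(diff_headers(before, after))
# {'added': ['x-correlation-id'], 'removed': ['authorization'], 'modified': ['content-type']}
```

Lower-casing the names avoids reporting a header as removed and re-added when a policy only changes its capitalization.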

Performance Analysis

Trace mode provides detailed timing metrics to help detect performance issues.

Timing Metrics

The following metrics are displayed for each trace record:

| Metric | Description |
|---|---|
| Total Duration | Total time from request entry to response exit (ms) |
| Pre-flow Duration | Total execution time of pre-flow policies (ms) |
| Route Duration | Time to connect to the backend and receive its response (ms) |
| Backend Duration | Net backend API response time (ms) |
| Post-flow Duration | Total execution time of post-flow policies (ms) |
| Gateway Overhead | Time added by Apinizer Gateway: Total Duration - Backend Duration (ms) |
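The Gateway Overhead row is derived from two other rows. A short worked example with made-up numbers (not measured values) shows the arithmetic and how to express the overhead as a share of the total; the function names are illustrative only.

```python
def gateway_overhead_ms(total_ms: float, backend_ms: float) -> float:
    """Gateway Overhead = Total Duration - Backend Duration, as defined in the table."""
    if total_ms <= 0 or backend_ms < 0 or backend_ms > total_ms:
        raise ValueError("expected total_ms > 0 and 0 <= backend_ms <= total_ms")
    return total_ms - backend_ms


def overhead_share(total_ms: float, backend_ms: float) -> float:
    """Fraction of the total request time that the gateway itself added."""
    return gateway_overhead_ms(total_ms, backend_ms) / total_ms


# Made-up sample: 120 ms end to end, of which the backend needed 90 ms.
print(gateway_overhead_ms(120, 90))      # 30
print(f"{overhead_share(120, 90):.0%}")  # 25%
```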

Policy Performance Analysis

To analyze policy performance, examine:

- Slowest Policies

- Policy Count

- Average Policy Duration

- Policy Execution Order

To improve policy performance:

- Cache Policy: Use caching to reduce backend calls

- Conditional Flow: Skip unnecessary policies with conditions

- Script Optimization: Optimize slow operations in script policies

- Transformation: Remove unnecessary transformations
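The slowest policies, the policy count, the average policy duration, and the execution order can all be derived from the per-policy Execution Time values in the policy flow. A minimal sketch, assuming those values have been collected into `(policy name, ms)` pairs in execution order; `policy_stats` is a hypothetical helper and the policy names and durations are made up.

```python
from statistics import mean


def policy_stats(executions: list[tuple[str, float]], top: int = 2) -> dict:
    """Summarize per-policy execution times taken from one trace record."""
    slowest = sorted(executions, key=lambda item: item[1], reverse=True)[:top]
    return {
        "count": len(executions),
        "average_ms": mean(ms for _, ms in executions) if executions else 0.0,
        "slowest": slowest,
        # The input is already in execution order, so the order is kept as-is.
        "order": [name for name, _ in executions],
    }


# Made-up execution times for one request.
flow = [("Basic Auth", 4.0), ("Script", 38.0), ("JSON to XML", 12.0), ("Log", 2.0)]
print(policy_stats(flow))
```

Here the Script policy would be the first candidate for the script optimization suggested above.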

Backend Performance Metrics

To monitor backend API performance:

| Metric | Description |
|---|---|
| Connection Time | TCP connection time to the backend server (ms) |
| SSL Handshake Time | SSL handshake time for HTTPS connections (ms) |
| Response Time | Backend response generation time (ms) |
| Total Backend Time | Connection Time + Response Time (ms) |
| Backend Status | Success status of the backend call |
| Retry/Failover Count | Number of retries or failovers performed |
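Looking at several trace records of the same endpoint shows whether backend time is dominated by connection setup or by response generation. A sketch under stated assumptions: `BackendTiming` and `summarize_backend` are hypothetical helpers, the values are copied by hand from trace records, and the sample timings are made up.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class BackendTiming:
    """Backend metrics read from one trace record (hypothetical container)."""
    connection_ms: float
    response_ms: float
    retries: int = 0  # Retry/Failover Count

    @property
    def total_ms(self) -> float:
        # Total Backend Time = Connection Time + Response Time, as in the table.
        return self.connection_ms + self.response_ms


def summarize_backend(records: list[BackendTiming]) -> dict:
    """Average the backend metrics over several trace records."""
    if not records:
        raise ValueError("need at least one trace record")
    return {
        "avg_connection_ms": mean(r.connection_ms for r in records),
        "avg_response_ms": mean(r.response_ms for r in records),
        "avg_total_ms": mean(r.total_ms for r in records),
        "records_with_retries": sum(1 for r in records if r.retries > 0),
    }


# Made-up timings from three trace records of the same endpoint.
samples = [BackendTiming(5, 80), BackendTiming(45, 85, retries=1), BackendTiming(4, 75)]
print(summarize_backend(samples))
```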

Use Cases

Scenario 1: Performance Issue Detection

Situation: Response times of an API Proxy are higher than expected.

Examine Policy Flow

Detect Bottlenecks

- Is the backend API slow? → Optimize the backend

- Are policies slow? → Optimize scripts and transformations

- Is a database query slow? → Consider using a cache
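The first two questions above come down to comparing where the time went. A rough sketch, assuming you read Backend Duration, Pre-flow Duration, and Post-flow Duration from the timing metrics of one trace record; `likely_bottleneck` is a hypothetical helper, and the 60% threshold is an arbitrary illustration, not an Apinizer default. It cannot tell whether a slow database query is involved; that still needs the policy and backend details.

```python
def likely_bottleneck(backend_ms: float, pre_flow_ms: float, post_flow_ms: float,
                      threshold: float = 0.6) -> str:
    """Return which part of the flow dominates the measured time.

    A part counts as the bottleneck when it accounts for more than
    `threshold` of backend time plus policy time (a hypothetical rule of thumb).
    """
    policies_ms = pre_flow_ms + post_flow_ms
    measured = backend_ms + policies_ms
    if measured <= 0:
        return "no data"
    if backend_ms / measured > threshold:
        return "backend"
    if policies_ms / measured > threshold:
        return "policies"
    return "mixed"


# Made-up timings.
print(likely_bottleneck(backend_ms=420, pre_flow_ms=15, post_flow_ms=10))  # backend
print(likely_bottleneck(backend_ms=40, pre_flow_ms=95, post_flow_ms=20))   # policies
```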

Scenario 2: Debugging

Situation: Some requests return a 500 error and the cause is unknown.

Find the Policy Where the Error Occurred

Examine Policy Details

- Examine the message coming to the policy (Before)

- Read the error message

- Examine detailed log records

Perform Root Cause Analysis

- Is the data format wrong?

- Is a header missing?

- Is it a script error?

- Is the backend unreachable?
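Finding the policy where the error occurred means walking the flow in execution order and stopping at the first step whose Status is Failure. A minimal sketch, assuming the steps have been copied out of the trace as `Step` tuples; `Step`, `first_failure`, the step names, and the error message are all made up for illustration.

```python
from typing import NamedTuple, Optional


class Step(NamedTuple):
    phase: str         # e.g. "pre-flow", "route", "post-flow", "fault-handler"
    name: str          # policy name, or the upstream for the route step
    status: str        # "Success" or "Failure", as shown in the trace
    message: str = ""  # error message, if any


def first_failure(steps: list[Step]) -> Optional[Step]:
    """Return the first failed step in execution order, or None if all succeeded."""
    for step in steps:
        if step.status == "Failure":
            return step
    return None


# Made-up flow: a script policy fails, then the fault handler runs.
flow = [
    Step("pre-flow", "Basic Auth", "Success"),
    Step("pre-flow", "Script", "Failure", "Script error: missing field 'id'"),
    Step("fault-handler", "Error Handler", "Success"),
]
failed = first_failure(flow)
if failed:
    print(f"{failed.phase} / {failed.name}: {failed.message}")
```

Stopping at the first failure matters because later steps, such as the fault handler, usually run as a consequence of that error rather than cause it.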

Scenario 3: Transformation Validation

Situation: You want to verify that a JSON-to-XML transformation works correctly.

Select the Transformation Policy

Compare Before/After

- Before: Incoming JSON message

- After: Converted XML message

- Check if the transformation is correct
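Checking the transformation by eye works for small messages; for larger ones, the Before and After bodies can be compared programmatically. A minimal sketch, assuming the Before body is flat JSON with string, number, or boolean values and that the After body puts each field in a direct child element of the root with the same name. That mapping is an assumption: the actual element naming depends on how the transformation policy is configured. `fields_preserved` and the sample bodies are hypothetical.

```python
import json
import xml.etree.ElementTree as ET


def fields_preserved(before_json: str, after_xml: str) -> list[str]:
    """Return the JSON field names whose values are missing or different in the XML."""
    data = json.loads(before_json)
    root = ET.fromstring(after_xml)
    problems = []
    for key, value in data.items():
        element = root.find(key)  # direct child with the same name
        # XML text is always a string; JSON booleans are written as true/false.
        expected = str(value).lower() if isinstance(value, bool) else str(value)
        if element is None or (element.text or "") != expected:
            problems.append(key)
    return problems


# Made-up Before (JSON) and After (XML) bodies.
before = '{"orderId": 42, "status": "NEW", "paid": false}'
after = "<order><orderId>42</orderId><status>NEW</status><paid>false</paid></order>"
print(fields_preserved(before, after))  # []
```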

Scenario 4: Conditional Flow Testing

Situation: You want to verify that conditional policies work correctly.

Send Requests for Different Conditions

- Request for premium user

- Request for normal user

- Request for guest

Examine Trace for Each Request

Check Condition Evaluation

- What was the condition expression?

- What was the evaluation result?

- Did the correct policies run?
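The checks above can be turned into a small table of expectations per user type. A sketch, assuming you note which policies each request's trace shows as executed; the user types come from the steps above, while `EXPECTED`, `check_conditional_flow`, and the policy names are hypothetical.

```python
EXPECTED = {
    # Hypothetical policy names; replace them with the policies in your own flow.
    "premium": {"Authentication", "Premium Quota"},
    "normal": {"Authentication", "Standard Quota"},
    "guest": {"Guest Throttling"},
}


def check_conditional_flow(executed: dict[str, set[str]]) -> dict[str, dict[str, set[str]]]:
    """Compare executed policies with the expected set for each user type."""
    report = {}
    for user_type, expected in EXPECTED.items():
        ran = executed.get(user_type, set())
        missing = expected - ran
        unexpected = ran - expected
        if missing or unexpected:
            report[user_type] = {"missing": missing, "unexpected": unexpected}
    return report


# Made-up observations: the normal user was routed through the premium branch.
observed = {
    "premium": {"Authentication", "Premium Quota"},
    "normal": {"Authentication", "Premium Quota"},
    "guest": {"Guest Throttling"},
}
print(check_conditional_flow(observed))
```

An empty report means every request took the branch its condition should have selected; any entry points to the condition expression worth re-examining in the trace.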

Best Practices

Trace Usage

Use Frequently in the Development Environment

- Trace continuously during development

- Always test with trace when adding new policies

- Validate API changes with trace

Be Careful in Production

- Activate trace in production only when necessary

- Trace mode turns off automatically after 5 minutes

- Consider the performance impact

Use Custom Query

- Filter records with a Custom Query in the filter field next to the environment selection

- Trace only the relevant endpoints

- Minimize unnecessary trace records

Use on API Proxy Basis

- Start trace separately for each API Proxy

- Activate trace mode from the relevant API Proxy’s page

- Trace records are stored in MongoDB

Performance Monitoring

Regular Monitoring:

- Run a performance trace once a week

- Perform trend analyses

- Detect slowdowns early

Optimization Cycle:

- Detect bottlenecks with trace

- Optimize

- Validate improvement with trace

- Document results

Debugging

Systematic Approach:

- Isolate the problem (which endpoint, under which condition?)

- Collect detailed information with trace

- Perform root cause analysis

- Fix

- Validate with trace

- Document the process

Message Comparison:

- Compare the input and output messages of each policy

- Detect unexpected changes

- Check transformation correctness